MOD-15 is a self-initiated WebGL research project built to find out how far real-time 3D rendering can go inside a production Next.js app — without pre-renders, video files, or canvas hacks. Two distinct experiments, one shared architecture: scroll position as the single source of truth for everything on screen.

One Clock. Two Systems.

The core constraint was keeping the 3D scene and the HTML content perfectly synchronised without sacrificing native scroll performance. The solution: read window.scrollY directly inside useFrame on every render tick, with no GSAP ScrollTrigger, no React state, and no re-renders. Both systems share a single value, computed once per frame at the display's refresh rate.
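A minimal sketch of that shared clock, assuming the scroll offset is normalised to a 0..1 progress value before anything consumes it. The names scrollProgress, scrollClock, and tick are hypothetical and not taken from the project; the math is kept free of DOM and React imports so it can run anywhere.

```typescript
// Hypothetical helper: converts a raw scroll offset into a 0..1 progress value.
function scrollProgress(
  scrollY: number,
  documentHeight: number,
  viewportHeight: number,
): number {
  const scrollable = documentHeight - viewportHeight;
  if (scrollable <= 0) return 0; // the page does not scroll at all
  return Math.min(1, Math.max(0, scrollY / scrollable));
}

// Shared mutable value: written once per frame, read by every consumer.
// A plain object avoids React state, so updating it triggers no re-render.
const scrollClock = { progress: 0 };

// Assumed call site, inside a React Three Fiber component:
//   useFrame(() => tick(
//     window.scrollY,
//     document.documentElement.scrollHeight,
//     window.innerHeight,
//   ));
function tick(
  scrollY: number,
  documentHeight: number,
  viewportHeight: number,
): number {
  scrollClock.progress = scrollProgress(scrollY, documentHeight, viewportHeight);
  return scrollClock.progress;
}
```

Because the value lives in a plain object rather than in component state, both the 3D scene and any HTML-side effects can read scrollClock.progress in the same frame without scheduling a React update.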

Each 3D model is a live GLB rendered in real time through React Three Fiber. Bones, skinning weights, and animation data are all live, not baked. The walk cycle on the character model runs as a real skeletal animation, driven by the Three.js animation system, while the same mesh is simultaneously displaced by a scroll-driven shader.

Custom GLSL Vertex Displacement

A custom GLSL vertex shader chunk is injected at material compile time via onBeforeCompile, which hooks into Three.js's standard material pipeline and exposes the shader source for modification before it is compiled. The displacement function implements 3D Simplex noise in GLSL, displacing each vertex along its normal vector by an amplitude that scales with scroll progress. As you scroll, the mesh tears apart vertex by vertex.
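One way the injection step could look is sketched below as a pure string transform. It assumes the material's vertex shader contains the standard `#include <common>` and `#include <begin_vertex>` chunk markers, and that the Simplex noise source is supplied separately as a GLSL string defining `snoise(vec3)`. The uniform names uScrollProgress and uAmplitude, and the helper patchVertexShader, are hypothetical.

```typescript
// Uniform declarations added near the top of the vertex shader (names are assumptions).
const DISPLACEMENT_HEADER = `
uniform float uScrollProgress;
uniform float uAmplitude;
`;

// Runs right after Three.js declares "transformed" in the begin_vertex chunk.
// The frequency factor 2.0 is an arbitrary illustrative value.
const DISPLACEMENT_BODY = `
#include <begin_vertex>
float n = snoise(position * 2.0);
transformed += normalize(normal) * n * uAmplitude * uScrollProgress;
`;

// Returns a patched copy of the vertex shader source.
// noiseGlsl is expected to define "float snoise(vec3 p)".
function patchVertexShader(vertexShader: string, noiseGlsl: string): string {
  if (
    !vertexShader.includes("#include <common>") ||
    !vertexShader.includes("#include <begin_vertex>")
  ) {
    throw new Error("expected Three.js chunk includes not found");
  }
  // Function replacers are used so "$" sequences in GLSL are never interpreted.
  return vertexShader
    .replace(
      "#include <common>",
      () => `#include <common>\n${DISPLACEMENT_HEADER}\n${noiseGlsl}`,
    )
    .replace("#include <begin_vertex>", () => DISPLACEMENT_BODY);
}

// Assumed call site on a Three.js material:
//   material.onBeforeCompile = (shader) => {
//     shader.uniforms.uScrollProgress = { value: 0 };
//     shader.uniforms.uAmplitude = { value: 0.5 };
//     shader.vertexShader = patchVertexShader(shader.vertexShader, noiseSource);
//   };
```

Patching at the begin_vertex marker means the displacement is applied to the local-space position before the built-in skinning chunk runs, which is one plausible way for the walk cycle and the displacement to coexist.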

To avoid traversing the full scene graph on every frame, compiled shader uniform references are cached into a meshShadersRef array at setup time. The useFrame loop only iterates that cache, keeping per-frame cost proportional to the number of displaced meshes rather than the total number of nodes in the scene graph. The character is rendered in wireframe at 0.45 opacity, lime-tinted, making the displacement visible without a separate render pass.
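The caching idea can be sketched with a simplified stand-in for the scene graph. SceneNode, CompiledShader, collectShaders, and applyProgress are hypothetical names, and the uniform key uScrollProgress is an assumption; in the real project the nodes would be Three.js objects and the returned array would live in meshShadersRef.

```typescript
type Uniform = { value: number };
type CompiledShader = { uniforms: Record<string, Uniform> };

// Minimal stand-in for a scene node (an assumption, not the Three.js type).
interface SceneNode {
  children: SceneNode[];
  shader?: CompiledShader; // present only on meshes whose material was patched
}

// Setup time: walk the whole graph once and keep only the shader references.
function collectShaders(root: SceneNode): CompiledShader[] {
  const found: CompiledShader[] = [];
  const stack: SceneNode[] = [root];
  while (stack.length > 0) {
    const node = stack.pop()!;
    if (node.shader) found.push(node.shader);
    stack.push(...node.children);
  }
  return found;
}

// Per frame: touch only the cached entries, never the graph itself.
function applyProgress(cache: CompiledShader[], progress: number): void {
  for (const shader of cache) {
    const uniform = shader.uniforms["uScrollProgress"];
    if (uniform) uniform.value = progress;
  }
}
```

Writing to the cached uniform objects is enough because the renderer reads the same object references when it draws, so no material rebuild or recompile is needed.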

Cinematic Camera Waypoint System

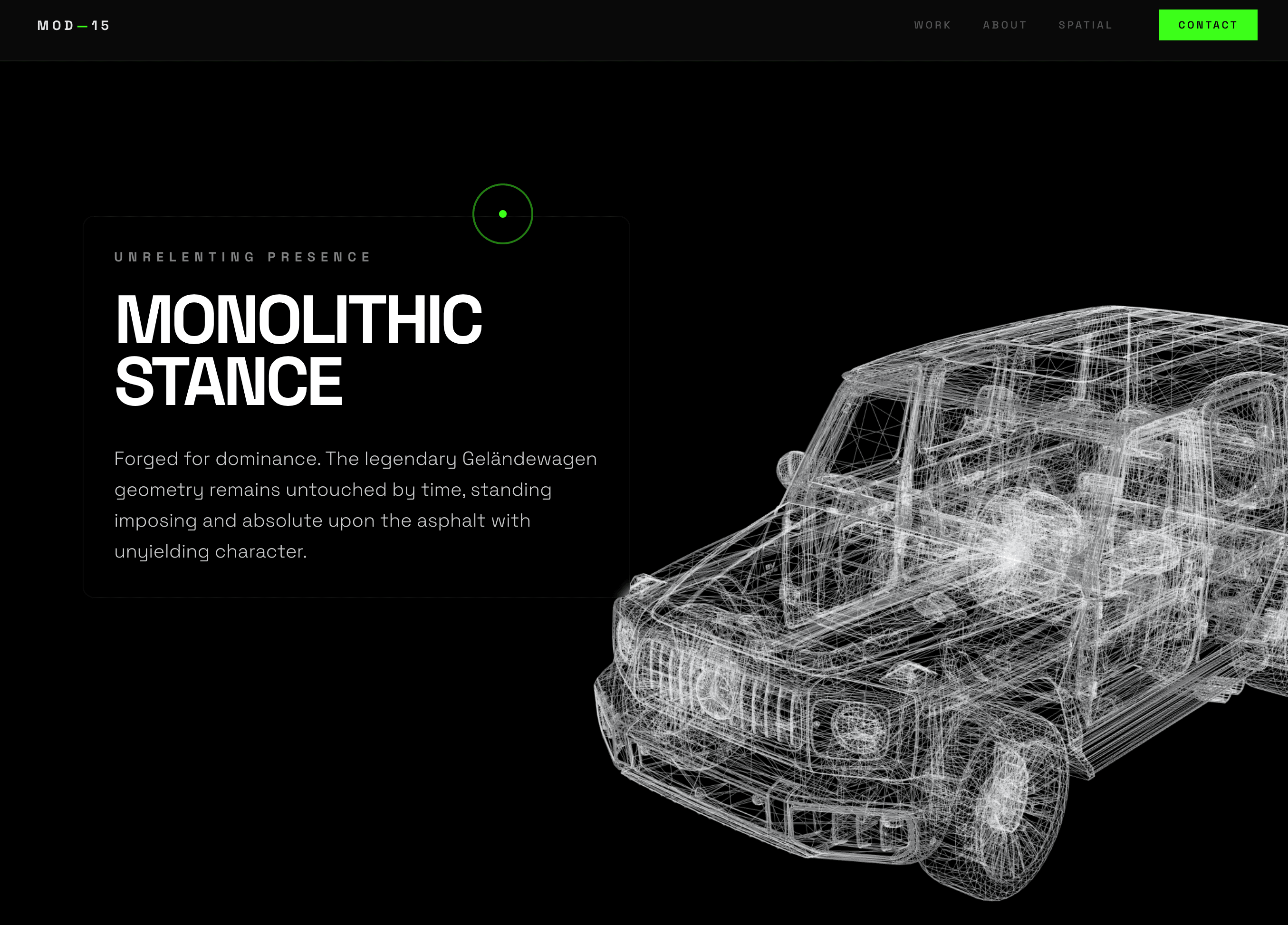

The G63 experiment renders a wireframe AMG G63 GLB with 8 pre-calculated camera positions — each one cinematically framed to a specific part of the car. As the user scrolls through 8 sections of editorial copy, the camera lerps between those exact positions and lookAt targets: front view, rear, interior through the window, low front grille, left diagonal, full side profile, rear vault, final front approach.
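A rough sketch of how scroll progress could select and blend waypoints is shown below. It assumes the waypoints are spread evenly across the 0..1 scroll range, which the write-up does not state; the Waypoint type and sampleWaypoints are hypothetical. The scalar lerp here is mathematically equivalent to THREE.MathUtils.lerp and is inlined only to keep the example dependency-free.

```typescript
type Vec3 = [number, number, number];

interface Waypoint {
  position: Vec3; // where the camera sits
  lookAt: Vec3;   // what the camera points at
}

const lerp = (a: number, b: number, t: number): number => a + (b - a) * t;

const lerpVec3 = (a: Vec3, b: Vec3, t: number): Vec3 => [
  lerp(a[0], b[0], t),
  lerp(a[1], b[1], t),
  lerp(a[2], b[2], t),
];

// Maps a 0..1 progress value onto the waypoint list and blends the two
// neighbouring waypoints. Position and lookAt are interpolated separately.
function sampleWaypoints(waypoints: Waypoint[], progress: number): Waypoint {
  if (waypoints.length === 0) throw new Error("at least one waypoint is required");
  if (waypoints.length === 1) return waypoints[0]!;

  const clamped = Math.min(1, Math.max(0, progress));
  const scaled = clamped * (waypoints.length - 1);
  // Clamp the index so progress === 1 resolves to the last segment with t = 1.
  const index = Math.min(Math.floor(scaled), waypoints.length - 2);
  const t = scaled - index;

  const from = waypoints[index]!;
  const to = waypoints[index + 1]!;
  return {
    position: lerpVec3(from.position, to.position, t),
    lookAt: lerpVec3(from.lookAt, to.lookAt, t),
  };
}
```

With 8 waypoints this yields 7 segments; the sampled position and lookAt could then be applied to the camera each frame, for example via camera.position.set(...) and camera.lookAt(...).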

Camera position and lookAt are interpolated independently using THREE.MathUtils.lerp, giving the camera a physical feel — it arrives rather than snaps. The floor mesh is removed at load time by computing bounding box extents for each child mesh and culling anything with a near-zero Y extent, ensuring Center calculates true model bounds rather than inflating them with baked-in ground planes.
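The floor-removal check might look like the sketch below, written against plain bounding-box data rather than Three.js objects. The types Bounds and MeshInfo, the helper names, and the epsilon threshold of 1e-3 are all assumptions; the project's actual threshold is not given.

```typescript
interface Bounds {
  min: [number, number, number];
  max: [number, number, number];
}

interface MeshInfo {
  name: string;
  bounds: Bounds;
}

// A mesh counts as a ground plane when its vertical extent is (almost) zero.
// The default epsilon is an illustrative guess; it may need scaling to the model's units.
function isFlatGroundPlane(bounds: Bounds, epsilon = 1e-3): boolean {
  const yExtent = bounds.max[1] - bounds.min[1];
  return yExtent < epsilon;
}

// Returns only the meshes that should contribute to the model's bounds.
function removeGroundPlanes(meshes: MeshInfo[], epsilon = 1e-3): MeshInfo[] {
  return meshes.filter((mesh) => !isFlatGroundPlane(mesh.bounds, epsilon));
}

// With Three.js, the bounds for each child could be obtained roughly like this:
//   const box = new THREE.Box3().setFromObject(child);
//   const size = box.getSize(new THREE.Vector3());
//   if (size.y < epsilon) child.removeFromParent();
```

Filtering before centering matters because a wide, flat floor quad would otherwise stretch the combined bounding box and shift the computed centre away from the car itself.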